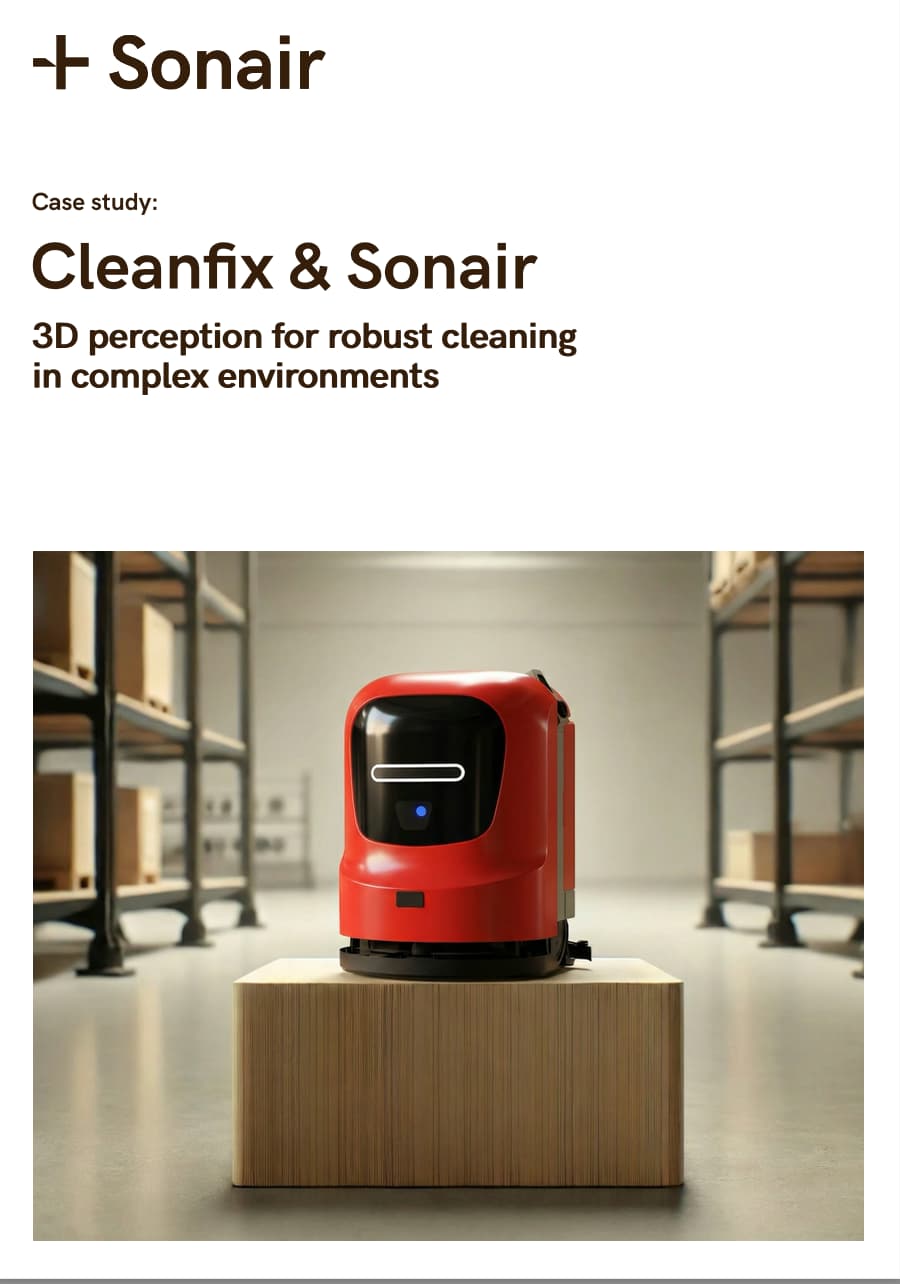

How Cleanfix is advancing the new generation of the RA660 Navi XL cleaning robot with 3D ultrasonic sensing from Sonair.

Autonomous cleaning robots are rapidly becoming essential in commercial and industrial environments, but real-world conditions remain a major barrier to reliable, scalable deployment.

In this case study, we explore how Cleanfix is tackling these challenges head-on with its next-generation RA660 Navi XL, combining robust robot design with a new approach to environmental perception.

At the heart of this evolution is Sonair’s ADAR 3D ultrasonic sensor, enabling true spatial awareness in environments where traditional sensing technologies struggle.

The case study highlights how Cleanfix is achieving more stable, predictable performance.

The result is not just better obstacle detection but a measurable improvement in operational efficiency. Fewer interruptions, lower maintenance effort, and more predictable robot behavior translate directly into reduced total cost of ownership and easier scaling across fleets.

Download the full case study to learn how Cleanfix and Sonair are redefining perception in cleaning robotics—and what it takes to build autonomous systems that perform reliably in the complexity of the real world.

What is the angular resolution of your sensor?

Angular resolution is not easily defined for ADAR because its pulse is not sent out at a discrete angle. If you are familiar with LiDARs, angular resolution is the angular distance (in degrees) between the distance measurements of two beams.

This does not apply to ADAR because it does not emit beams. The angular precision is 2° straight ahead and 10° to the sides. The sensor can distinguish between multiple detected objects if they are separated by more than 2 cm in range relative to the sensor or by more than 15° in angle. If two objects are closer than both 2 cm in range and 15° in angle, they are detected as a single object.
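As a rough illustration of the separation rule above, the following Python sketch checks whether two objects would be reported as separate detections. It is a simplification under stated assumptions, not Sonair's implementation: the function name `resolved_as_separate` and the constants are hypothetical, each object is reduced to one range and one angle in the sensor frame, and only the 2 cm and 15° thresholds come from the text.

```python
# Thresholds taken from the text; all names here are hypothetical.
RANGE_SEPARATION_M = 0.02     # 2 cm separation in range
ANGLE_SEPARATION_DEG = 15.0   # 15 degrees separation in angle


def resolved_as_separate(range1_m, angle1_deg, range2_m, angle2_deg):
    """Return True if two objects exceed either separation threshold.

    Assumption: each object is described by a single range (metres) and a
    single angle (degrees) measured in the same sensor frame.
    """
    range_gap = abs(range1_m - range2_m)
    angle_gap = abs(angle1_deg - angle2_deg)
    # Exceeding one threshold is enough for the objects to be told apart.
    return range_gap > RANGE_SEPARATION_M or angle_gap > ANGLE_SEPARATION_DEG


if __name__ == "__main__":
    # Same direction, 5 cm apart in range: separate detections.
    print(resolved_as_separate(1.00, 0.0, 1.05, 0.0))
    # 1 cm apart in range and 5 degrees apart: merged into one detection.
    print(resolved_as_separate(1.00, 0.0, 1.01, 5.0))
    # Same range, 20 degrees apart: separate detections.
    print(resolved_as_separate(1.00, -10.0, 1.00, 10.0))
```

The check uses a logical "or" because clearing either threshold is sufficient; two objects merge only when they fall inside both limits at once.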

Precision is a measure of the statistical deviation of repeated measurements on a single object’s position.
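To make this definition concrete, the sketch below estimates precision from repeated measurements of one stationary object. It assumes that "statistical deviation" means the sample standard deviation, which the text does not state explicitly. The function name `precision` and the readings are made up for illustration and are not sensor data.

```python
import statistics


def precision(samples):
    """Sample standard deviation of repeated measurements of one quantity.

    Assumption: precision is interpreted as the sample standard deviation;
    the text only says "statistical deviation".
    """
    return statistics.stdev(samples)


if __name__ == "__main__":
    # Hypothetical repeated angle readings (degrees) of a single fixed object.
    angle_readings_deg = [0.4, -1.1, 1.8, -0.6, 0.2, -1.5, 1.3]
    print(f"angular precision: {precision(angle_readings_deg):.2f} deg")
```

A smaller value means repeated measurements of the same object cluster more tightly around their mean.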

What is the maximum number of points in the point cloud?

The maximum number of points is very rarely a limit on the sensor’s performance, because few points are needed to fully sense a scene. ADAR reports one point per surface on any object, which keeps the total number of points low. This is the opposite of what one might be used to from LiDARs.

The relative sparsity of the point cloud is a fundamental feature of sound-based sensing, but this is not a sensor limitation as the point cloud will always contain at least 1 point per object within line of sight from the sensor.

Can the sensor distinguish between humans and objects among detected obstacles?

ADAR does not perform object classification. The sensor detects people and objects alike but does not distinguish between them.